Where to find best historical data for ai search

Let’s cast aside the notion that quantity trumps quality in data science. An ocean of historical data does exist, but diving into its depths, we find not every treasure is as glittering as it appears. Much of this data falls short to fuel AI search, overlaid with irrelevance or low quality.

The true gem is the ‘elite’ historical data – a well-curated, structured subset that enhances AI’s comprehension of context, subtlety, and evolving information. In this article, we will embark on a quest for such elite data – identifying it, sourcing it, and most importantly, leveraging its power in unearthing valuable insights for AI search engines.

Characteristics That Set Apart ‘Elite’ Historical Data

So what paints historical data as ‘elite’? It’s not just about being old or voluminous. Picture a sprawling archive, packed with seemingly boundless records. Yet, without the essential traits of contextual richness, temporal accuracy, semantic consistency, and domain specificity, it’s akin to a vast wilderness, where treasure isn’t easily found – or understood.

Consider the difference between a well-indexed archive of academic journals and a jumbled compilation of random forum posts. The former offers a methodical dive into specialized knowledge, each entry tagged with meticulous precision, boosting the AI’s contextual understanding. The latter, although teeming with experiential wisdom maybe, mostly phases into irrelevant chatter, blurring the AI’s ability to extract value.

In a similar vein, financial reports significantly outshine social media sentiment when training AI for monetary market analysis. The formal text in those reports bears high semantic consistency, aiding the AI in making sense of industry jargon and intricate operations. On the contrary, deciphering the colloquial language and emojis prevalent on social media platforms might mislead the AI, causing it to map inaccurate predictions.

Thus, generic web scrapes or unstructured archives often fall short. Their inadequacy doesn’t lie in their content, but in the lack of structure, which makes these data sources akin to wild forests rather than organized libraries. Identifying ‘elite’ data means switching torchlight towards such structured, contextually rich, and domain-specific sources. They are the friendly guides in our quest for superior AI search engine performance.

Grasping the True Essence: Semantic Depth Over Keywords

Let’s bust a common myth that swirls around AI search – the belief that keywords act as the magical keys to unlock the treasure troves of AI comprehension. The reality, however, paints a more nuanced picture. Elite historical data lends AI a much-awaited semantic profundity, and that’s what allows it to grasp much more than mere words. It learns to decode the implicit intent, to understand the intricate network of relationships, and to comprehend vast arrays of concepts. This extended capability enables AI to tackle complex inquiries and deliver results that perfectly align with the searcher’s intent, rather than blindly matching search terms.

Now, imagine you’re searching for ‘best historical data for AI search.’ A keyword-driven search might take you to general sources offering historical data, while a semantic-leaning AI would understand your specific intent – acquiring top-notch historical data tailored for AI search use. It dives deeper into the meaning, considering ‘best’ as the need for high-quality, ‘historical data’ as domain-specific, archival information and ‘AI search’ as the ultimate application. That’s how meaningful, finely-tuned results make their way to you, demonstrating the true power of semantic understanding in refining search outcomes.

Top-tier Sources: Where to Find Premium Historical Data

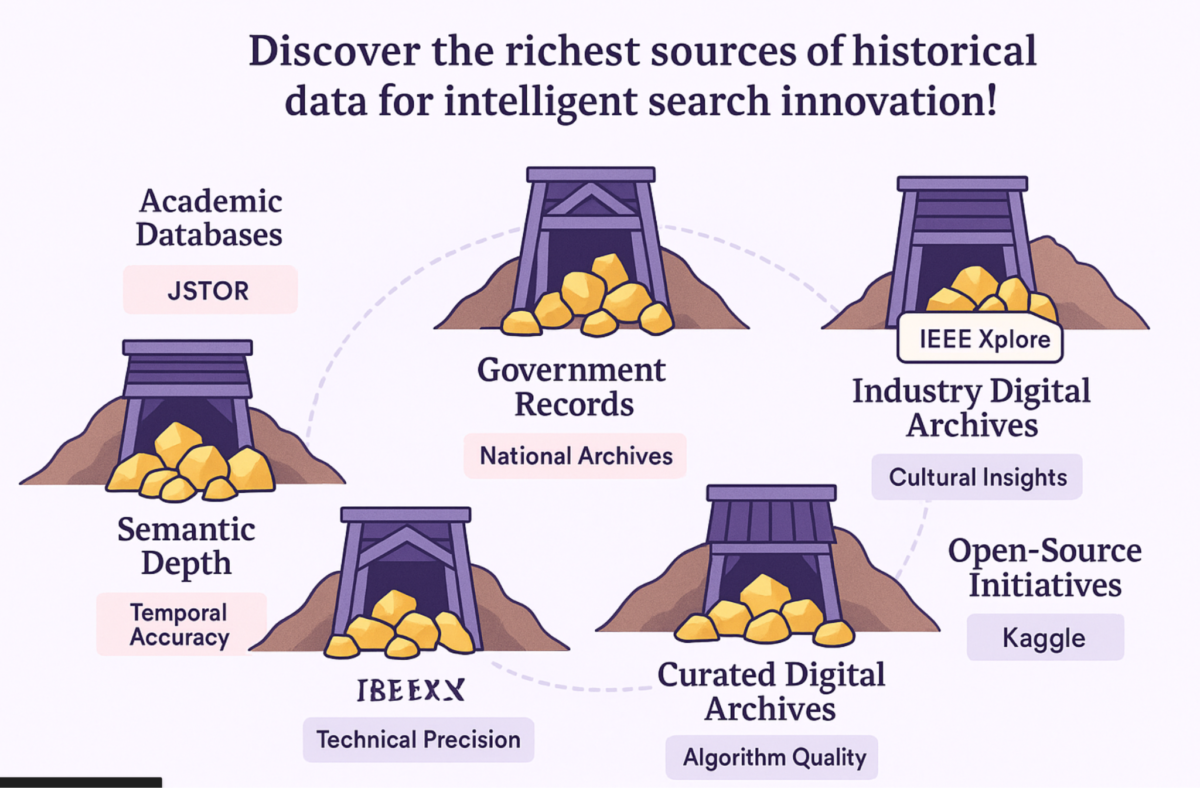

Looking for the perfect data to boost your AI’s search capabilities? Here’s where to find the finest historical data:

1. Academic & Research Databases: Loaded with scholarly articles, research studies, and scientific findings, these databases like JSTOR, arXiv, PubMed are heaven for diverse, high-quality, and trusted data. Their meticulously structured and indexed content provides deep insights and amplifies the contextual understanding of AI.

2. Government & Public Records: Think of National Archives, Census Bureau, or SEC filings. Stacked with credible public records, they offer a real-world reflection of societal changes over the years. This data is potentially invaluable for training AI in understanding patterns and trends.

3. Specialized Industry Repositories: Data pertaining to specific industries like financial market data providers or patent databases not only bolster AI’s domain expertise but also play a pivotal role when training AI for niche applications like market predictions or IP analytics.

4. Curated Digital Libraries & Archives: Picture Project Gutenberg’s collection of classic texts or university digital collections. They present rich linguistic diversity and add layers of semantic depth to AI’s comprehension. Perfect for AI training in areas like natural language processing or historical text analysis.

5. Open-Source Data Initiatives: With platforms like Kaggle and domain-focused GitHub repositories, the possibilities seem endless. Freely accessible, these data sets reduce the cost burden and help democratize AI development, making it feasible for the community at large.

Scouting these sources can lend AI a nuanced understanding of contexts and user intent, surpassing the traditional, keyword bound comprehension. Remember, premium data sets act as the fuel to power up the AI engine – with the right fuel, the journey to better performance becomes smooth and rewarding.

Harnessing Key Tools: Historical Data Curation and Access

True, combing through historical data might feel like an arduous task. Let’s ease that burden with the right tools and platforms designed for data access, cleaning, and curation. For instance, data warehousing solutions come in handy for storing and managing vast datasets. Tools such as Google BigQuery, Amazon Redshift, or Snowflake offer robust storage capabilities paired with analytical functions.

Extraction, Transformation, and Loading (ETL) tools, on the other hand, cleanse and structure raw data, preparing it for effective analysis. Open-source options like Apache NiFi and commercial solutions like Informatica PowerCenter equip you to transform unwieldy historical data into a format your AI can readily digest.

But, what about specific tooling for educational data in AI? The answer lies in platforms like Edubrain. Empowering structured learning and data organization, it prepares data to an instrumental level, especially for educational AI applications, whether they require insights from AI games for kids or frameworks for AI science solvers and AI math solvers. You see, Edubrain organizes learning pathways and problem-solving steps in a structured manner. This translates into a rich source of transactional data that records user engagement patterns and problem-solving approaches.

Hence, whether you’re managing massive historical databases or training an AI to recognize patterns, prudent selection and effective usage of tools can drastically ease your efforts. Tools and platforms simplify the intimidating process of data scouring, offering a path to not only find data but use it efficiently.

Converting Raw Data to AI-Ready Ingredients

Remember baking your first cake? You started with raw ingredients, carefully measured and mixed them. Well, AI search thrives on a similar principle! Fed with raw historical data, it needs structured, cleaned, and optimized information to achieve better understanding. Here’s a crisp breakdown of the transformation process:

- Data Cleaning and Preprocessing: First things first, you must clean up your data. Address missing values, correct inconsistencies, and improve coherence. Imagine sieving flour to rid lumps.

- Normalization and Standardization: Like balancing cake ingredients to achieve the right texture and taste, standardized data brings consistency. It aids machine learning models in making fair and accurate inferences across diverse datasets.

- Feature Engineering: Just as flavors are enhanced with intricate steps like caramelization, creating relevant features from the raw data enhances AI understanding. Drawing additional insights through combinations or scrutinizing specific elements in greater depth helps.

- Indexing and Storage Optimization: This is about efficient retrieval, much like arranging ingredients for easy accessibility. An optimized storage system returns search results swiftly, creating a seamless user interface.

- Version Control: Track changes and modifications to your data over time. It’s akin to noting down improvements to your cake recipe for your next baking session! Version control systems keep a record of amendments made to data, providing transparency and supporting consistency in results.

By following these steps, you can serve up the perfect ‘meal’ for your AI model, priming it for more sophisticated comprehension and search competency. Now, that’s a recipe for success!

Ethical Challenges: Tackling Bias and Privacy in Historical Data Usage

Let’s assume you’re loading up your AI search engine with expansive volumes of historical data, hoping to devise a design closer to natural human functioning. But wait, you need to remember this pivotal aspect–the ethical standpoint. You see, historical datasets come with baggage and might have biases woven into them. Gender biases, racial biases, or even temporal ones may inadvertently make their way into your AI model, skewing search results.

So, how deep does the impact of bias run? Suppose your search engine responds to queries that are more focused toward the male gender. In such a scenario, the AI search model becomes biased and unrepresentative of a diverse user base, damaging the fundamentals of equality.

But biases aren’t the sole culprits in bringing in ethical questions. When delving into historical information, especially personal or sensitive content, privacy concerns pop up. For instance, let’s consider the data compliance laws such as GDPR and CCPA, which highlight the importance of safeguarding user data. Any infringement on the privacy rules would be a direct violation of legal regulations.

So, how could one navigate this labyrinth of ethical and legal constraints? It all starts with a strong strategy aimed at detecting and mitigating biases embedded in your data. A scrutiny of every stage in the data processing pipeline helps. Couple that with constant monitoring and adherence to data compliance guidelines, and you’ve chosen a path that respects ethics and the law. Consider this a critical ‘expert’s warning’ or ‘ethical imperative’ as you venture further into leveraging historical data.

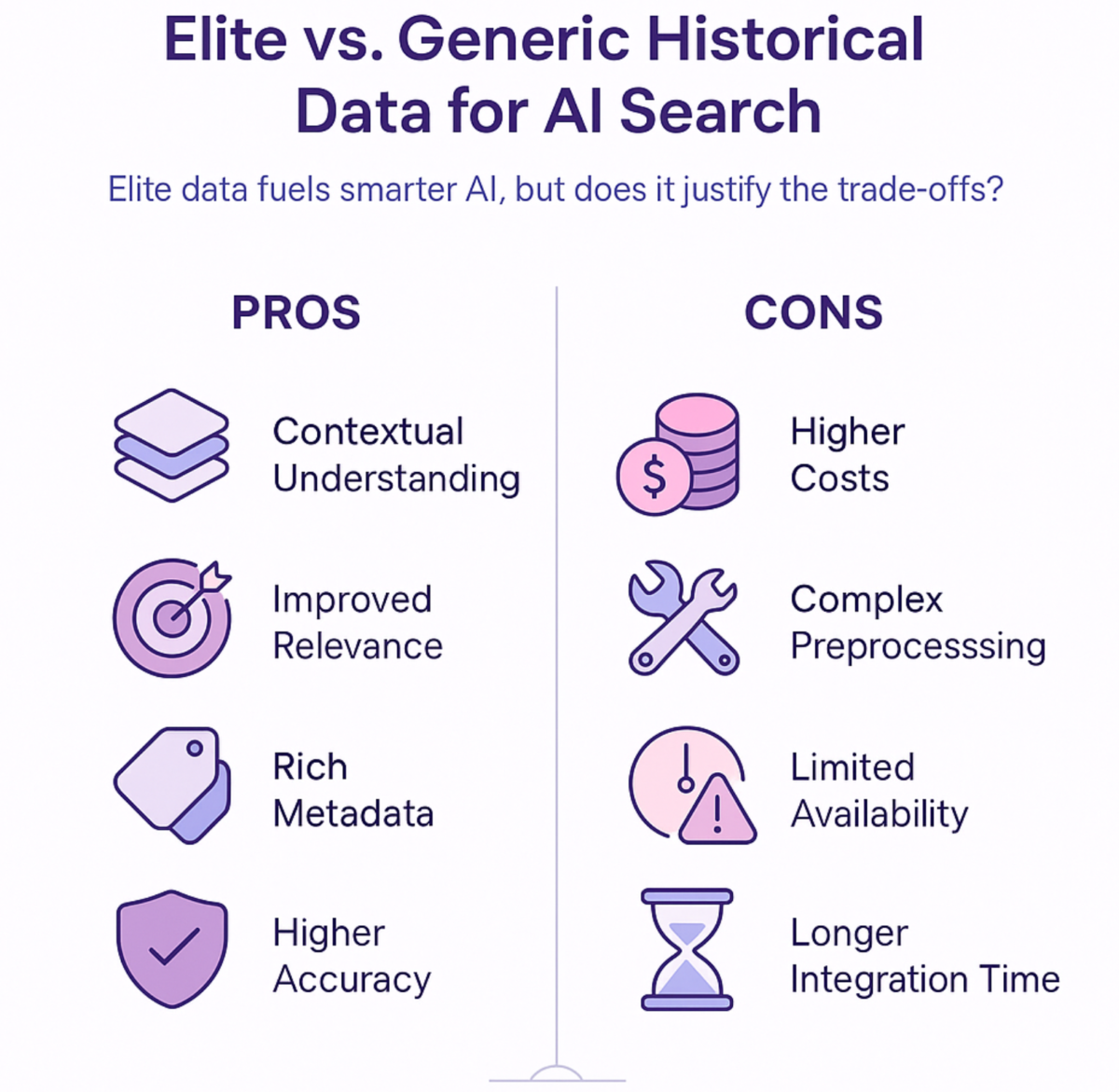

The Balance Sheet: Elite Historical Data’s Impact on AI Search

The allure of tagging a vast expanse of historical data into your AI search model can be compelling. However, it’s crucial to take a balanced view of its advantages and obstacles. Here’s a compiled cheat sheet of the pros and cons for your consideration:

Pros

- Precision Boost: Elite historical data increases the relevance scores of your AI system. Imagine refining your search results so that they resonate more accurately with user queries.

- Contextual Understanding: This wide dataset provides a deeper context, helping your AI system understand nuanced user inquiries.

- Less Hallucination: Hallucinations, where AI invents nonexistent information, get significantly reduced when nourished with rich historical data.

- Fast Results: Want your AI model to converge faster? High-quality historical data might give it a speed boost.

- Improved Long-tail Query Handling: Comprehensive data aids in handling specific, longer-tail user queries more effectively.

Cons

- Pricy Acquisition: Top-notch historical data can be pricey to obtain. Developing an exhaustive library of information comes at a cost.

- Complex Preprocessing: You might have to roll up your sleeves for intricate preprocessing. The extensive data requires proper cleaning and prepping before it’s AI-ready.

- Bias Landmines: High-performing data can hold subtle biases. Expert oversight is necessary to keep bias at bay and ensure neutral application.

- Storage Struggles: A vast pool of data? You’ll need plenty of storage space for that. Consider the additional capacity and how that may escalate costs.

Identifying the rewards and pitfalls of using elite historical data can ensure you approach AI search development with a sharpened perspective. Keeping these guides in your back pocket might just help you strike the right balance to successfully deploy your AI search engine.

The New Era: Reinventing AI Search with High Grade Historical Data

Old data sets and generic information are slowly being pushed to the backburner. Instead, it’s high-value historical data that now takes center stage, defining the future of AI search. The journey involves tireless efforts in sourcing, refining, and ensuring responsible utilization of these datasets, each step as significant as the other.

By breathing vibrancy into archaic knowledge with conscientious engineering, we can give AI search engines the keys to comprehend and explore our shared human knowledge. This path we tread opens the door to an exciting evolution, a future where your AI can echo the richness and complexity of human understanding within search results.